It is used in a combination known as ELK stack which stands for Elasticsearch, Logstash, and Kibana.Īmazon Elasticsearch is a fully-managed scalable service provided by Amazon that is easy to deploy, operate on the cloud. Get your free trial right away! Introduction to Amazon Elasticsearch Image SourceĮlasticsearch is an open-source platform used for log analytics, application monitoring, indexing, text-search, and many more. Solve your data replication problems with Hevo’s reliable, no-code, automated pipelines with 150+ connectors. To learn more about Amazon S3, visit here. You can use Amazon S3 with almost all of the leading ETL tools and programming languages to read, write, and transform the data. which can interact with S3 using its API.Īmazon S3 allows users to manage the data securely, and it also offers periodic backup and versioning of the data. It also has exceptional support for the leading programming languages such as Python, Scala, Java, etc. Amazon S3 is a popular choice all around the world due to its exceptional features.Īmazon S3 has a simple UI that allows you to upload, modify, view, and manage the data. Amazon S3 also provides high data availability, and it claims to be 99.999999999% of data durability. Amazon S3 provides a scalable and secure data storage service that can be used by customers and industries of all sizes to store any data format like weblogs, application files, backups, codes, documents, etc.

Basic understanding of data and data flow.Īmazon Simple Storage Service, which is commonly known as Amazon S3, is an object storage service offered by Amazon to store the data.To transfer data from S3 to Elasticsearch, you must have: Read along to understand these steps and their benefits in detail! Prerequisites This way we can see how severe a log entry was and what server it originated from.In this blog post, you will learn about S3 and AWS Elasticsearch, its feature, and 3 easy steps to move the data from AWS S3 to Elasticsearch. In the log columns configuration we also added the log.level and agent.hostname columns. The indices that match this wildcard will be parsed for logs by Kibana. Check that the log indices contain the filebeat-* wildcard.

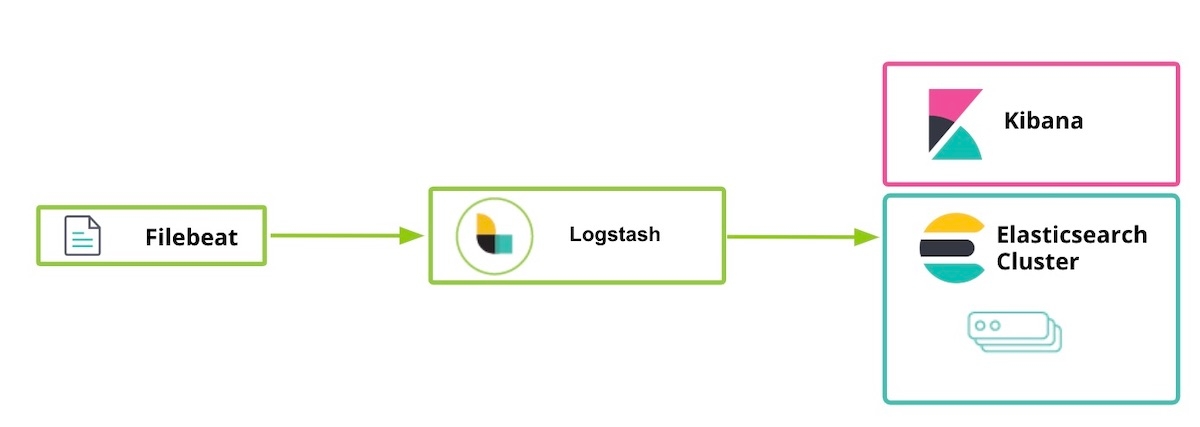

This can be configured from the Kibana UI by going to the settings panel in Oberserveability -> Logs. ERROR : something went wrongįilebeat (and ElasticSearch's ingress) need a more structured logging format like this: logging : files : rotateeverybytes : 10485760įinally, the last thing left to do is configuring Kibana to read the Filebeat logs.

By default, the Laravel logging format looks like this: local. Using Filebeat, logs are getting send in bulk, and we don't have to sacrifice any resources in the Flare app, neat! Integration in Laravel This happens in a separate process so it doesn't impact the Flare Laravel application. It's a tool by ElasticSearch that runs on your servers and periodically sends log files to ElasticSearch. Every time something gets logged within Flare, we would need to send a separate request to our ElasticSearch cluster, which could happend hundreds of times per second. However, this synchronous API call would make the Flare API really slow. When something is logged in our Flare API, we could immediately send that log message to ElasticSearch using the API. It can also show you logs that are sent to ElasticSearch as part of the ELK stack. This isn't only used to manage the ElasticSearch cluster and its contents. It's rather straightforward use it too search our logging output too.ĮlasticSearch provides an excellent web client called Kibana. We decided to not use these services because we already are using an ElasticSearch cluster to handle searching errors. They provide a UI for everything you send to them. There are a couple of services out there to which you can send all the logging output. In this blog post, we'll explain how we combine these logs in a single stream. The only problem is that, whenever something goes wrong, we need to manually log in to each server via SSH to check the logs. This is quite helpful when something goes wrong. Finally, there are worker servers which will process these reports and run background tasks like sending notifications and so on.Įach one of these servers runs a Laravel installation that produce interesting metrics and logs. Reporting servers will take dozens of error reports per second from our clients and store them for later processing. We've got web servers that serve the Flare app and other public pages like this blog. 
Flare runs on a few different servers and each one of them has its own purpose.
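To make the Laravel integration step a bit more concrete: the post says Laravel's default log lines look like `local.ERROR: something went wrong`, while Filebeat needs a more structured format. The sketch below shows one way such a conversion could look, written in Python for illustration only. It is not Flare's actual setup, which would live in Laravel's own logging configuration. The bracketed timestamp prefix, the UTC interpretation of that timestamp, and the output field names (`@timestamp`, `log.level`, `channel`, `message`) are all assumptions, chosen to line up with the `log.level` column mentioned for Kibana.

```python
import json
import re
from datetime import datetime, timezone

# Assumed shape of a default Laravel log line: "[timestamp] channel.LEVEL: message".
# The post only shows the "local.ERROR: something went wrong" part, so the
# timestamp prefix is treated as optional here.
LINE_PATTERN = re.compile(
    r"^(?:\[(?P<timestamp>[^\]]+)\]\s+)?"
    r"(?P<channel>\w+)\.(?P<level>[A-Z]+)\s*:\s*"
    r"(?P<message>.*)$"
)


def to_structured(line: str) -> dict:
    """Parse one plain-text log line into a flat dict of named fields."""
    text = line.strip()
    match = LINE_PATTERN.match(text)
    if match is None:
        # Keep lines we cannot parse (e.g. stack trace frames) as raw messages.
        return {"message": text}

    record = {
        "log.level": match["level"].lower(),  # hypothetical field name
        "channel": match["channel"],          # hypothetical field name
        "message": match["message"],
    }
    if match["timestamp"]:
        try:
            parsed = datetime.strptime(match["timestamp"], "%Y-%m-%d %H:%M:%S")
            # Assumption: timestamps are UTC; adjust for the app's timezone.
            record["@timestamp"] = parsed.replace(tzinfo=timezone.utc).isoformat()
        except ValueError:
            # Unknown timestamp layout: pass the raw value through unchanged.
            record["@timestamp"] = match["timestamp"]
    return record


def to_json_line(line: str) -> str:
    """Serialize the structured record as one JSON object per line."""
    return json.dumps(to_structured(line), sort_keys=True)


if __name__ == "__main__":
    print(to_json_line("local.ERROR : something went wrong"))
    print(to_json_line("[2024-01-01 12:00:00] local.ERROR: something went wrong"))
```

Writing one JSON object per line keeps each log entry self-describing, so a shipper running in a separate process can pick up the file and forward entries in bulk without the application making any requests itself.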
